AI will force you to face the hidden costs of not governing your data right

Data management is hot because the use of AI has highlighted its necessity. Organisations are finding that their data management practices are not sufficient for the transition to AI-operated business processes.

Haven’t we heard about the importance of organisational data management practices before? Yes, many times. But unlike previous times, nobody is promoting technological solutions as a ‘get out of jail free’ card. This time, human responsibility for data governance is being emphasised. This is progress.

Old habits are hard to break, though. This opinion piece centers on what I believe is a blind spot for those who are responsible for data governance in their organisations.

The key point of this piece is where I think your efforts should be focused: on your systems of record.

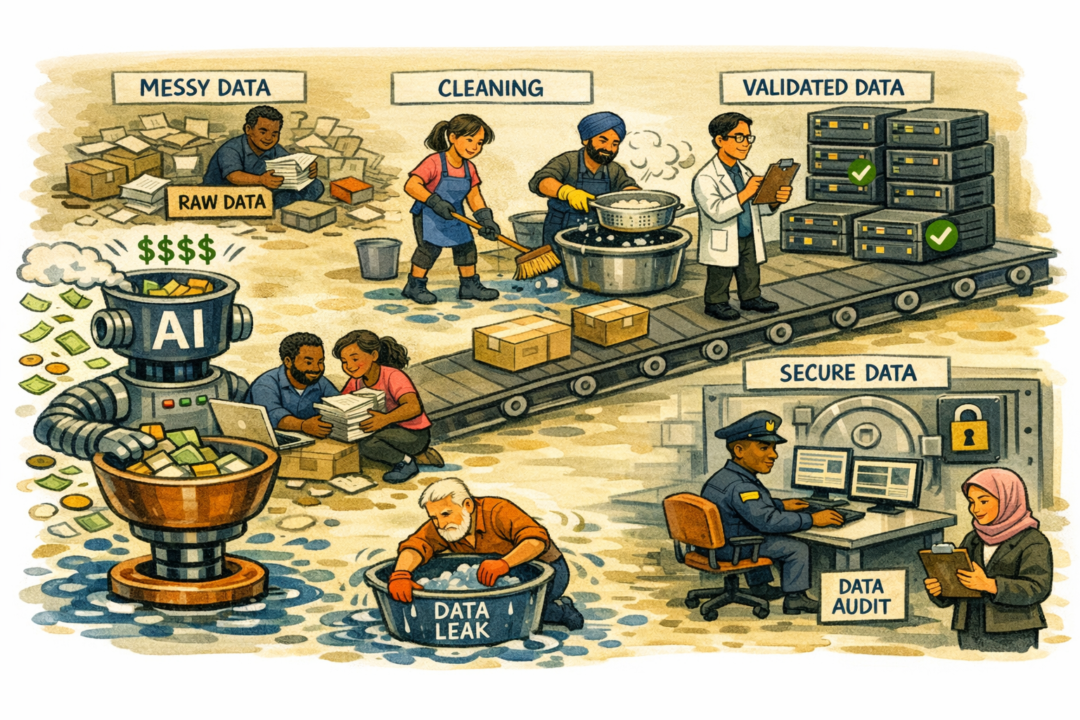

The hidden costs of common ways to address data issues

If you record and maintain your data ‘correctly’, it will be optimal for adopting AI. The question is: what is correct? It means that the data correctly represents the (business) event it records, and that people know what the data means when using it, or that an automated process using that data performs adequately within its defined boundaries.

But if you do not maintain your data correctly, there are a lot of (hidden) costs:

- It takes effort to rectify the automated or human process that uses the data when it gets out of bounds, or you might even lose money because of the process going wrong.

- You must put effort into investigating why the process went out of bounds in the first place.

- You schedule meetings about what to do to rectify the problem and how to prevent it from happening again.

- You will have to put effort into rectification.

- You will have to put effort into prevention.

So far, so good. Sounds like a PDCA (plan-do-check-act) cycle. The prevention point is where it gets interesting.

What often happens instead of rectifying the recording process is:

- We build secondary systems and data quality processes to signal outliers in the data.

- We define, build and maintain those quality processes, often in a disjointed data department.

- We have meetings across teams monitoring the outcomes of the quality processes, communicating the meaning of the outcomes.

- We put a lot of effort into getting on the same page: do we understand why the data is out of bounds, what it means, what the consequences are?

- We set up governance processes to make sure the right people address the outcomes of data quality monitoring processes, because the ones building and inspecting the quality processes are not the people who maintain the recording process.

This would already be enough to make you frown if it were a single dataset, but it happens to many disjointed datasets in an organisation, which we later integrate in a business line or process.

The impact of these habits on adopting AI

What happens if we consider employing AI to use that data, which is governed according to this multi-tier PDCA cycle, and to run the business process end to end, responding to events during the process as well?

Without giving you a calculation, I think we can agree that maintaining two, three or more sets of the same data in various states of quality adds significant costs to running those AI processes, as opposed to maintaining one consistent set of that data. Worse, you will incur additional costs to supervise the outcome of AI-powered business processes, since you are forced to put additional governance in place across your scattered data management processes.

I think it is safe to state that shaky data foundations will multiply their associated costs exponentially throughout the process. It is a snowball effect. So, what to do?

Taking the bull by the horns

The winning organisations will be the ones that get a grip on data at the time of recording: validated, unambiguous, complete, fit for purpose. That is step one, and it is hard to achieve in the first place. We need to redesign just about every business process and re-implement our systems of record. Ouch.
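To make ‘getting a grip at the time of recording’ a bit more tangible, here is a minimal sketch in Python. Everything in it is an assumption for illustration only: the `OrderRecorded` event, its fields, the `ALLOWED_CURRENCIES` set and the individual rules are hypothetical, and a real system of record would have its own events and boundaries. The point is only that the checks run before the data is stored, not in a secondary quality process afterwards.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal

# Hypothetical set of currencies this system of record accepts.
ALLOWED_CURRENCIES = {"EUR", "USD", "GBP"}


@dataclass(frozen=True)
class OrderRecorded:
    """Hypothetical business event, checked before it is stored."""
    order_id: str
    customer_id: str
    amount: Decimal
    currency: str
    order_date: date


def validation_errors(event: OrderRecorded, today: date) -> list[str]:
    """Return every reason the event may not be recorded (empty list = accept)."""
    errors = []
    # Complete: mandatory identifiers must be present.
    if not event.order_id.strip():
        errors.append("order_id is missing")
    if not event.customer_id.strip():
        errors.append("customer_id is missing")
    # Validated: the amount must stay within its defined boundaries.
    if event.amount <= 0:
        errors.append("amount must be positive")
    # Unambiguous: an amount without a known currency has no clear meaning.
    if event.currency not in ALLOWED_CURRENCIES:
        errors.append(f"unknown currency: {event.currency}")
    # Fit for purpose: an order cannot be dated in the future.
    if event.order_date > today:
        errors.append("order_date lies in the future")
    return errors


def record(event: OrderRecorded, store: list, today: date) -> None:
    """Store the event only if it passes every check; otherwise refuse it."""
    errors = validation_errors(event, today)
    if errors:
        raise ValueError("; ".join(errors))
    store.append(event)
```

In this sketch a rejected event never reaches the store, so the person or process doing the recording gets the feedback immediately, and no downstream team has to discover and interpret the outlier later.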

But that’s not even enough. If you are going to give this a shot, then organize your business processes along the data path. Right now, we have organized them in the standard hierarchical functional breakdown. A remnant of the industrial revolution and its task optimization operating mode.

The data path crosses those hierarchical silos. What is now your disjointed or supporting data team IS your business team in the age of AI. Or rather: data management is becoming part of your regular business team.

There are not that many organisations that act on this. Partly because the skill of data management is hard to find. Partly because old habits are hard to shake. They have served us so well, and a lot of people have tied their income security to jobs needed to run the hierarchical, function-divided organisation. The industrial revolution started 2.5 centuries ago and we slowly evolved and optimized our practices with technological and economic progress.

We have tried many times to reorganise our data governance structures within that hierarchical setup. The Information Model of the 70s, the business data warehouses of the 80s and 90s, Semantic Web / Semantic Models, the Big Data era, and more recently Data Mesh (the original paper, not the technological slurry that has suffocated the initiative). All of it has failed and will fail.

I don’t buy into hype, but it is clear to me that reorganizing along data paths is a necessary condition for AI to be deployed successfully.

Only in hindsight can we call AI a new industrial revolution, and only if it forces organisations or supply chains to restructure themselves because the costs of doing things the way we used to are getting too high to stay in business. It is just a matter of economics in the end. Time will tell.